What AI do you trust the most? Do you even trust it at all? Those two questions helped shape this week’s chapter. We dive into the gaps we see in the finance world, trust within AI systems, and, dare we say, the decline of the resume. Enjoy!

Events for You:

[March 18th] - Women Investing Forward: Family Office, Wealth, & Venture

[April 4th] - Vibe FORWARD

[April 10th] - Oracle AI World Tour New York City

[April 27th] - Momentum AI NYC 2026

Trust Is A Product Feature

The race in artificial intelligence has been framed as a contest of intelligence. Which model writes better, which one codes faster, and which system wins the next benchmark. A recent wave of users experimenting with Claude instead of ChatGPT has been described as another chapter in the model wars, but the reasons people are giving tell a different story. The shift was not triggered by a breakthrough in performance or a dramatic new feature. It started with a question of trust.

The moment that sparked the conversation came from a governance decision. Anthropic publicly declined to allow its system to be used for domestic surveillance or autonomous weapons. Around the same time, OpenAI announced a partnership with the Pentagon that included safeguards but still stirred debate. For the past two years, generative AI adoption has moved quickly because the tools felt experimental. People tested them the way they test a new app. They wrote a few prompts, generated a few ideas, and moved on. When a tool feels temporary, trust barely enters the conversation. If it behaves strangely or disappears tomorrow, the damage is small, but that stage is ending.

AI assistants are moving into the center of real work. Once a system begins shaping decisions and workflows, the stakes change. Trust stops being philosophical and becomes operational. This is why the recent switching conversation matters. The ease with which people can now move between AI platforms has quietly lowered the cost of distrust. Prompts can be copied, workflows can be rebuilt, and knowledge bases often sit outside the model itself. The switching process is not frictionless, but it is possible in a way that enterprise software rarely allowed before.

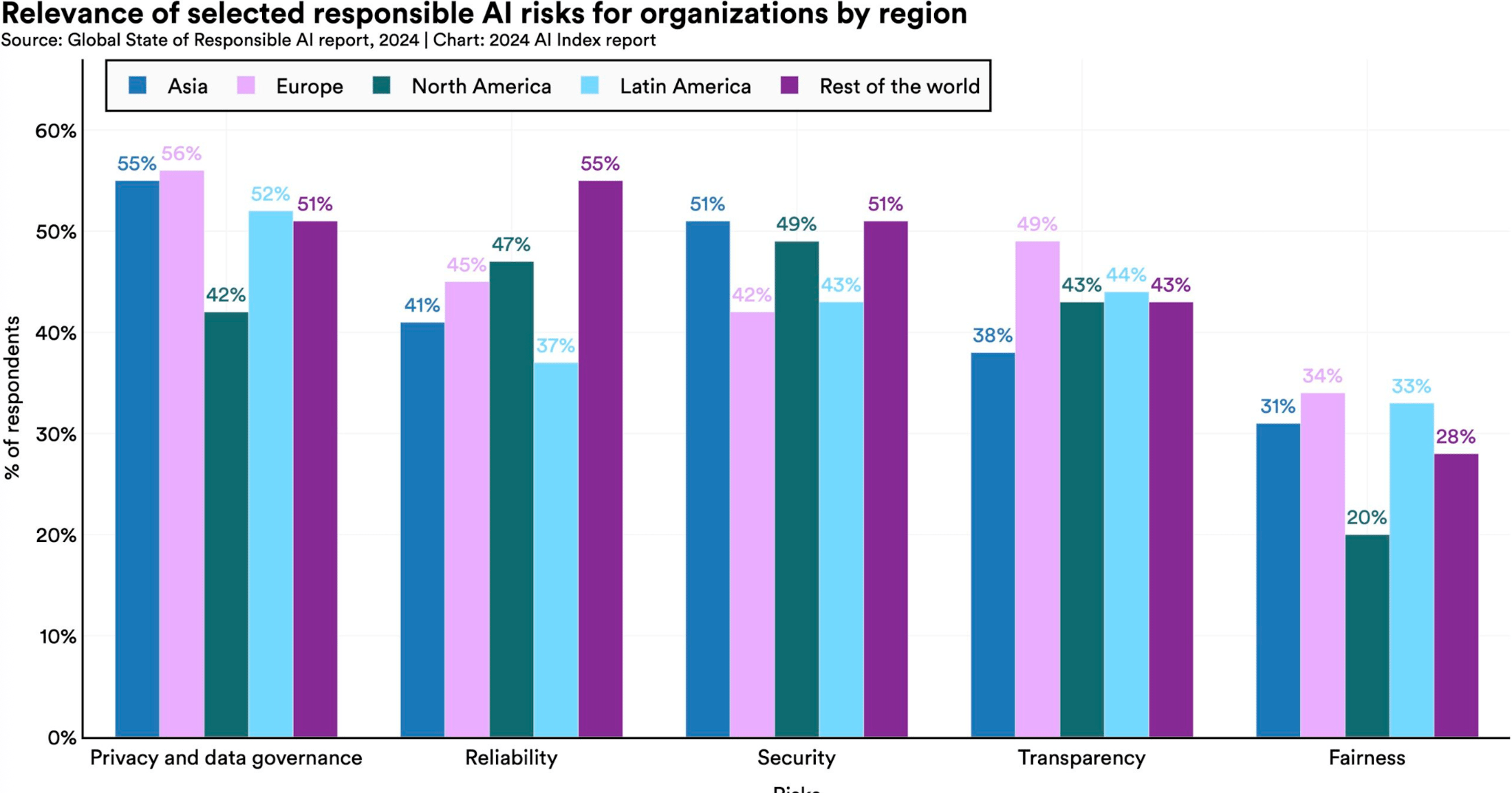

For the companies building these systems, that reality changes the competitive landscape. Model performance still matters, but it is no longer the only factor. Organizations are beginning to evaluate AI platforms the way they evaluate infrastructure. They want to know where their data goes and understand how policies might evolve over time. In other words, they want confidence that the platform will behave predictably long after the excitement of the latest release fades.

Inside companies, this shift is changing how teams deploy AI altogether. Instead of committing to a single assistant, many organizations are spreading their work across multiple models. One system may handle writing. Another may specialize in coding, and a third may integrate more comfortably with internal data. Part of that strategy is about performance. Different models have different strengths.

The deeper reason is trust. Relying on a single AI platform means accepting its policies, its pricing decisions, and its long-term direction. A multi-model strategy reduces that risk. If a provider changes course, the organization can shift workloads elsewhere without rebuilding everything from scratch. The pattern should sound familiar. Businesses have spent the past decade learning the same lesson in cloud computing and financial infrastructure. Redundancy protects the system. Flexibility protects the organization. Artificial intelligence is beginning to follow that path.
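For readers who build these workflows, the multi-model idea above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Provider` type, the `route` function, the task names, and the stand-in provider functions are all hypothetical and do not correspond to any vendor's actual API. The point is simply that each task has an ordered list of providers, and work shifts to the next one when the first fails.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Provider:
    """Hypothetical wrapper: a label plus a function that turns a prompt into text."""
    name: str
    call: Callable[[str], str]


class AllProvidersFailed(Exception):
    """Raised when no configured provider could handle the task."""


def route(task: str, prompt: str, routes: dict[str, list[Provider]]) -> tuple[str, str]:
    """Try each provider configured for the task, in order.

    Returns (provider name, response) from the first provider that succeeds.
    """
    errors = []
    for provider in routes.get(task, []):
        try:
            return provider.name, provider.call(prompt)
        except Exception as exc:  # any failure moves the work to the next provider
            errors.append(f"{provider.name}: {exc}")
    raise AllProvidersFailed(f"no provider handled task {task!r}: {errors}")


# Stand-in functions (assumptions): real code would call each vendor's SDK here.
def primary_writer(prompt: str) -> str:
    raise RuntimeError("provider unavailable")


def backup_writer(prompt: str) -> str:
    return f"draft for: {prompt}"


ROUTES = {
    "writing": [
        Provider("primary", primary_writer),
        Provider("backup", backup_writer),
    ],
}

name, text = route("writing", "quarterly update", ROUTES)
print(name, "->", text)
```

Because the routing table lives outside any single provider, changing course means editing `ROUTES` rather than rebuilding the workflow.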


Half’s Full View on Tech & IT Jobs, Part 3: Finance

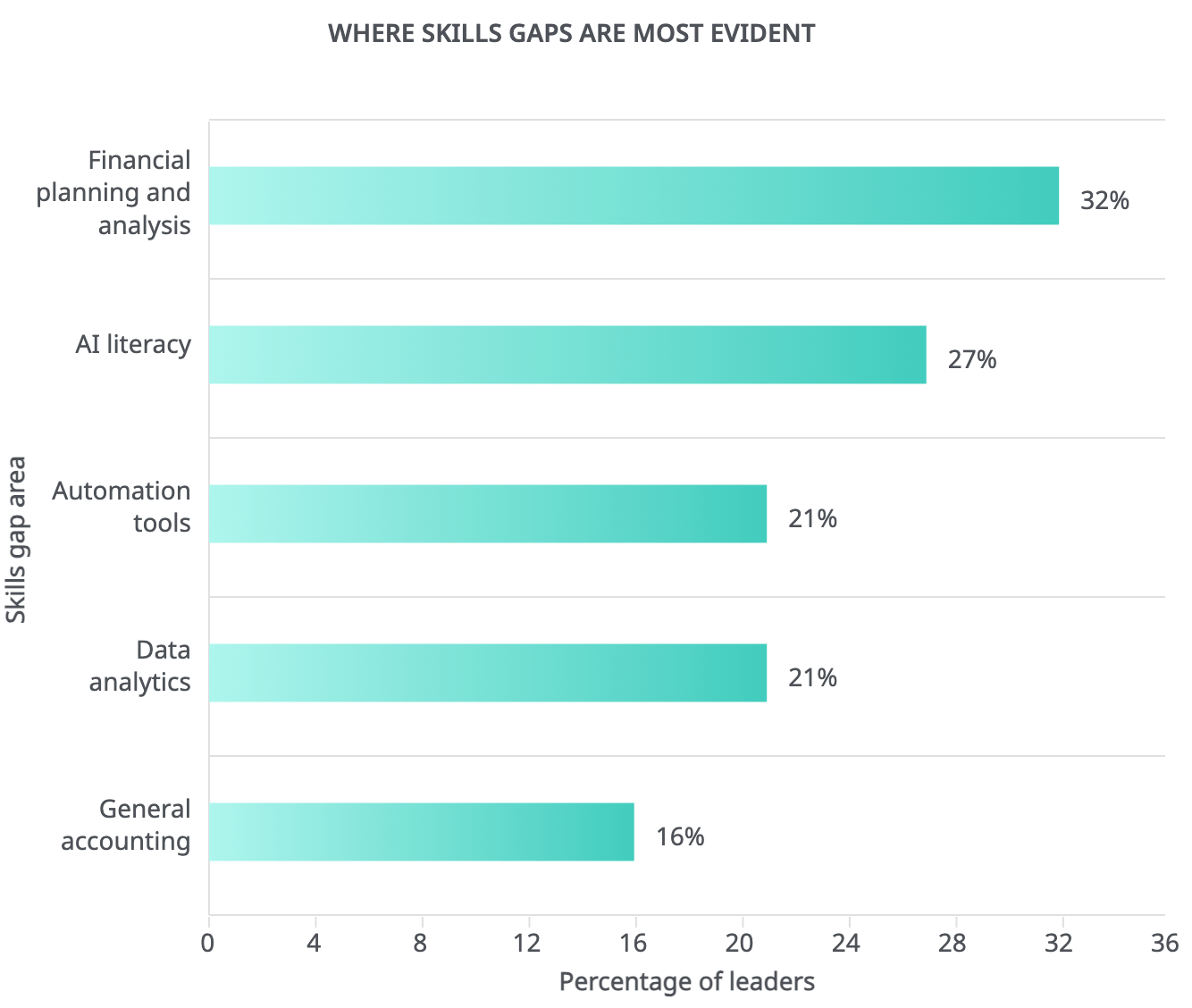

We’re taking another look at Robert Half’s 2026 Tech and IT Hiring Trends report, and this time the focus is on several professions ranging from finance to legal. Finance and accounting leaders are increasingly noting skills gaps in financial planning and analysis, as well as in AI literacy, automation tools, and data analytics.

The report cited the following software proficiencies as key:

Microsoft D365, Oracle NetSuite, Microsoft Power BI, QuickBooks, Python, SAP, SQL, and Workday

Over half (56%) of leaders in finance and accounting planned to increase hiring in the first six months of 2026. Similarly, 54% reported an increase in temp or contract hiring in the same timeframe. Robert Half also noted that some roles have been growing at above-average historical rates over the past year.

So what’s the big takeaway for finance and accounting professionals? The roadmap for a promotion or a pivot into finance and accounting in the age of AI has been laid out for you. It includes mastery of finance and accounting tools from Microsoft, Workday, and Oracle. It also involves automating workflows, conveying advanced AI literacy, and using AI tools to be elite at financial planning and analysis.

Resumes Are Losing Their Value

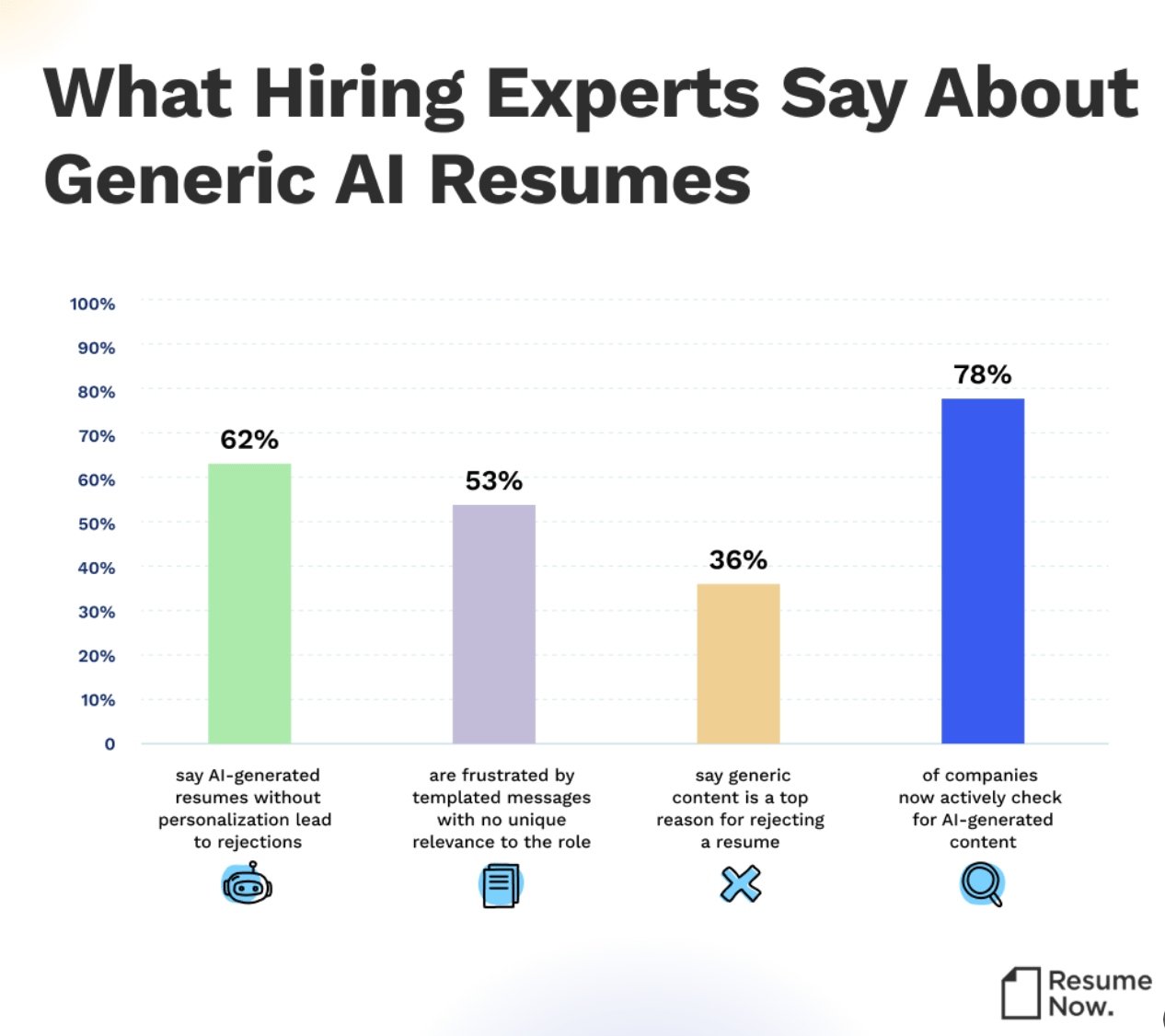

For most of modern hiring, the resume has been the gatekeeper. One document decides whether a recruiter ever speaks to you. It summarizes your work history, compresses your skills into bullet points, and signals whether you are worth the next step. The entire hiring funnel begins there. That system worked when resumes were harder to manufacture and when application volume was manageable. Neither of those conditions exists anymore.

Zapier recently ran a hiring experiment that shows how quickly this model is starting to change. The company piloted an AI-led recruiter screening process to deal with a problem that many talent teams now face. When Zapier opens a role, thousands of applications often arrive within the first two days. At first, the company assumed the main problem was fraud. Their internal data showed that roughly 30 percent of applications raised some form of concern. Some submissions came from bots, and some involved fake identities. Others were candidates using AI tools to generate polished resumes and cover letters that looked strong but did not always reflect real experience.

What Zapier uncovered was a different problem: recruiters simply did not have enough time to talk to everyone worth evaluating. Good candidates were slipping through the cracks because the system depended too heavily on a document that no longer carried a reliable signal. So the company tried something different. Instead of relying on resumes to decide who deserved a recruiter screen, Zapier invited many applicants to complete a short AI-led interview using a platform built by Ezra AI Labs. Candidates who opted in participated in a structured conversation that lasted about fifteen to twenty minutes. They could complete it whenever they wanted, from any time zone.

The system recorded the interview and produced a transcript, short video clips, and a summary of responses. Recruiters then reviewed those materials and decided whether a candidate should move forward. The AI did not make hiring decisions. It simply collected structured information so recruiters could evaluate candidates faster.

The results changed how the team thought about early-stage hiring:

Before the pilot, it took roughly eight days for an applicant to move from initial review to a completed recruiter screen. With the AI interviews in place, that window dropped to about 2.75 days.

Recruiters recovered around 84 hours of time during the test because reviewing an interview transcript and summary requires far less coordination than scheduling a live call.

Zapier screened 5x more applicants per role. When the company ran the process with engineering candidates, nearly a third of the people who advanced to hiring managers were individuals recruiters would not have had time to speak with under the old model.

Some of the strongest candidates did not have the most polished resumes. They were the ones who performed well once they were asked real questions.

Candidate reaction was better than expected. The interviews received an average rating of 4.5 out of five stars, and almost everyone who started the process finished it.

Many candidates appreciated being able to explain their thinking instead of relying entirely on a resume that may or may not capture their actual strengths. What Zapier tested points to a quiet shift in hiring. As AI tools make it easier to produce flawless resumes, companies are starting to look elsewhere for signals. A resume can tell you where someone worked. It is getting worse at telling you how someone actually thinks.